Hey, I'm Om

I'm a CS student at UT Austin, passionate about building solutions to real problems.

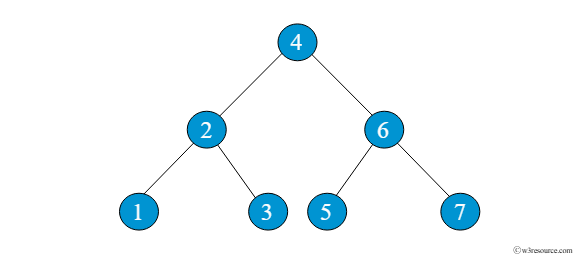

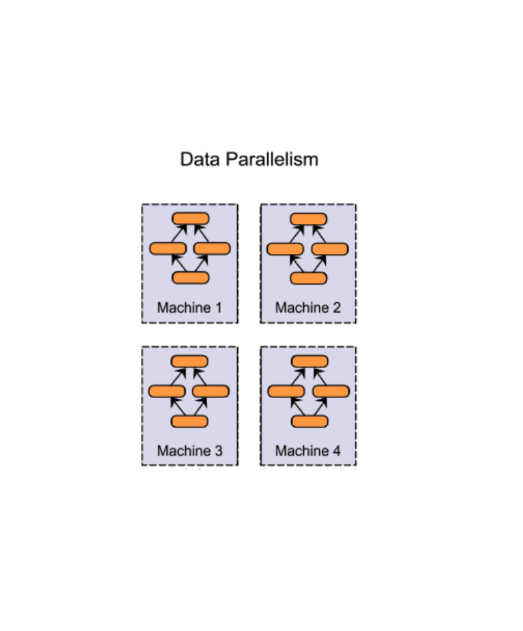

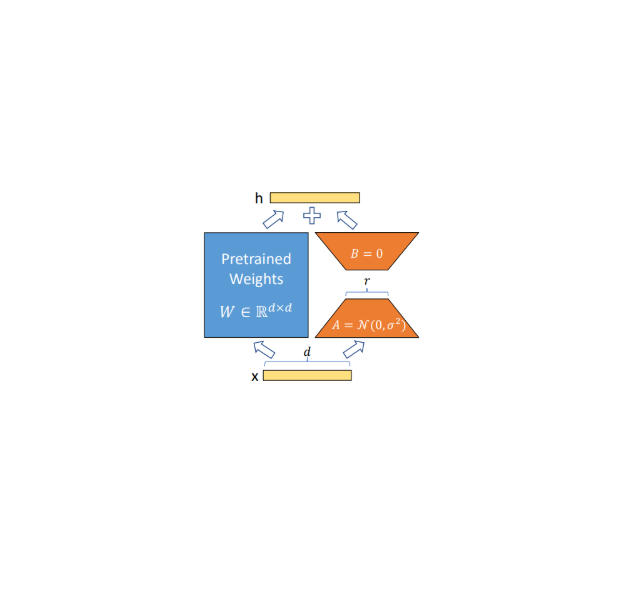

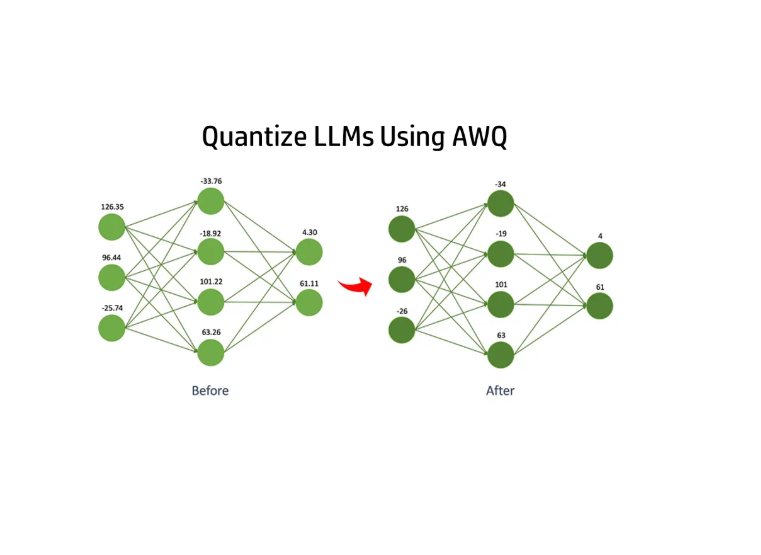

Full reproduction of the 124M-parameter GPT-2 from scratch: built the transformer (blocks, MLP, causal multi-head self-attention), loaded the released OpenAI weights to verify correctness, then trained from scratch with bfloat16, torch.compile, FlashAttention, and DDP across 4 A100 GPUs. Trained on FineWeb-Edu 10B and evaluated on HellaSwag, where the resulting model beats the score of the original GPT-2 124M.
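For anyone curious what "causal multi-head self-attention" boils down to, here is a minimal pure-Python sketch. It is not the project's PyTorch code: to keep it tiny, it assumes no batching and no learned Q/K/V or output projections (so q = k = v = x), and the function names are hypothetical. Each head attends over its own slice of the channels, and position t only sees positions 0..t.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v: lists of T vectors (lists of floats) of equal dimension d.
    """
    d = len(q[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for t in range(len(q)):
        # Only score positions s <= t: this truncation is the causal mask.
        scores = [scale * sum(a * b for a, b in zip(q[t], k[s])) for s in range(t + 1)]
        weights = softmax(scores)
        # Output is the attention-weighted sum of the visible value vectors.
        out.append([sum(w * v[s][j] for s, w in enumerate(weights)) for j in range(len(v[0]))])
    return out

def multi_head_causal_attention(x, n_head):
    # Simplification (assumption): q = k = v = x, no learned projections.
    d = len(x[0])
    assert d % n_head == 0, "channels must split evenly across heads"
    hs = d // n_head
    heads = []
    for h in range(n_head):
        xs = [row[h * hs:(h + 1) * hs] for row in x]
        heads.append(causal_attention(xs, xs, xs))
    # Concatenate the per-head outputs back along the channel dimension.
    return [sum((heads[h][t] for h in range(n_head)), []) for t in range(len(x))]

x = [[1.0, 0.0, 0.5, 0.5], [0.0, 1.0, 0.2, 0.8], [1.0, 1.0, 0.0, 1.0]]
out = multi_head_causal_attention(x, n_head=2)
```

Because the first token can only attend to itself, `out[0]` is exactly `x[0]`; in the real model, torch's fused attention kernels do the same masking far more efficiently.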

Worked through Andrej Karpathy's Neural Networks: Zero to Hero series, coding each lecture rather than just watching it, and documented the journey in a technical blog. Built the language-model stack from first principles: an autograd engine, a character-level bigram model, an MLP, batch normalization, a WaveNet-inspired model, a character-level transformer, a BPE tokenizer, and finally GPT-2, pretrained on 4 A100 GPUs.
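The first step of that stack, the autograd engine, fits in a few dozen lines. Below is a minimal sketch loosely modeled on micrograd's scalar `Value` design; the exact set of ops (`+`, `*`, `tanh`) is my choice for illustration and may differ from the code in the blog. Each operation records its inputs and a local backward rule, and `backward()` walks the graph in reverse topological order applying the chain rule.

```python
import math

class Value:
    """A scalar that remembers how it was computed so gradients can flow back."""

    def __init__(self, data, children=()):
        self.data = data
        self.grad = 0.0
        self._children = children
        self._backward = lambda: None  # leaf nodes have nothing to propagate

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other))
        def backward():
            # d(a + b)/da = d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad
        out._backward = backward
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other))
        def backward():
            # d(a * b)/da = b, d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad
        out._backward = backward
        return out

    def tanh(self):
        t = math.tanh(self.data)
        out = Value(t, (self,))
        def backward():
            # d tanh(x)/dx = 1 - tanh(x)^2
            self.grad += (1 - t * t) * out.grad
        out._backward = backward
        return out

    def backward(self):
        # Topologically sort so each node's grad is fully accumulated
        # before it is pushed to its children.
        order, seen = [], set()
        def visit(node):
            if node not in seen:
                seen.add(node)
                for child in node._children:
                    visit(child)
                order.append(node)
        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            node._backward()

a, b = Value(2.0), Value(-3.0)
c = a * b + a  # c = -4.0
c.backward()   # a.grad = b + 1 = -2.0, b.grad = a = 2.0
```

Note the `+=` in every backward rule: `a` is used twice in `c`, so its gradient contributions have to accumulate rather than overwrite each other.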

A Git-like chat session management tool for coding agents. The implementation is available as an open-source MCP server, along with a modified mini-swe-agent integration for benchmarking.
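To give a rough feel for what "Git-like" means for a chat session, here is a hypothetical sketch: conversation turns become commits with parent links, and branches are named pointers, so an agent can fork from an earlier point and try a different approach without losing either history. The class and method names (`SessionRepo`, `commit`, `branch`, `checkout`, `history`) are illustrative assumptions, not the MCP server's actual tools or data model.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Commit:
    # One snapshot step: the messages added here plus a link to the parent.
    id: int
    messages: list
    parent: Optional["Commit"] = None

@dataclass
class SessionRepo:
    commits: list = field(default_factory=list)
    branches: dict = field(default_factory=dict)
    head: str = "main"

    def __post_init__(self):
        # Start every repo with an empty root commit on "main".
        root = Commit(0, [])
        self.commits.append(root)
        self.branches["main"] = root

    def commit(self, *messages):
        # Record new messages on top of the current branch tip.
        tip = self.branches[self.head]
        c = Commit(len(self.commits), list(messages), tip)
        self.commits.append(c)
        self.branches[self.head] = c
        return c.id

    def branch(self, name, from_id=None):
        # A branch is just a named pointer to an existing commit.
        start = self.branches[self.head] if from_id is None else self.commits[from_id]
        self.branches[name] = start

    def checkout(self, name):
        self.head = name

    def history(self):
        # Walk parent links to rebuild the context the agent would see.
        msgs, c = [], self.branches[self.head]
        while c is not None:
            msgs = c.messages + msgs
            c = c.parent
        return msgs

repo = SessionRepo()
base = repo.commit("user: fix the failing test", "agent: reading test_utils.py")
repo.commit("agent: trying approach A")
repo.branch("approach-b", from_id=base)
repo.checkout("approach-b")
repo.commit("agent: trying approach B")
```

After this, `history()` on `approach-b` ends with approach B, while checking out `main` still shows approach A; both share the same two opening messages.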